Problems check

Learn how to check all your training data and find out whether there are issues with it.

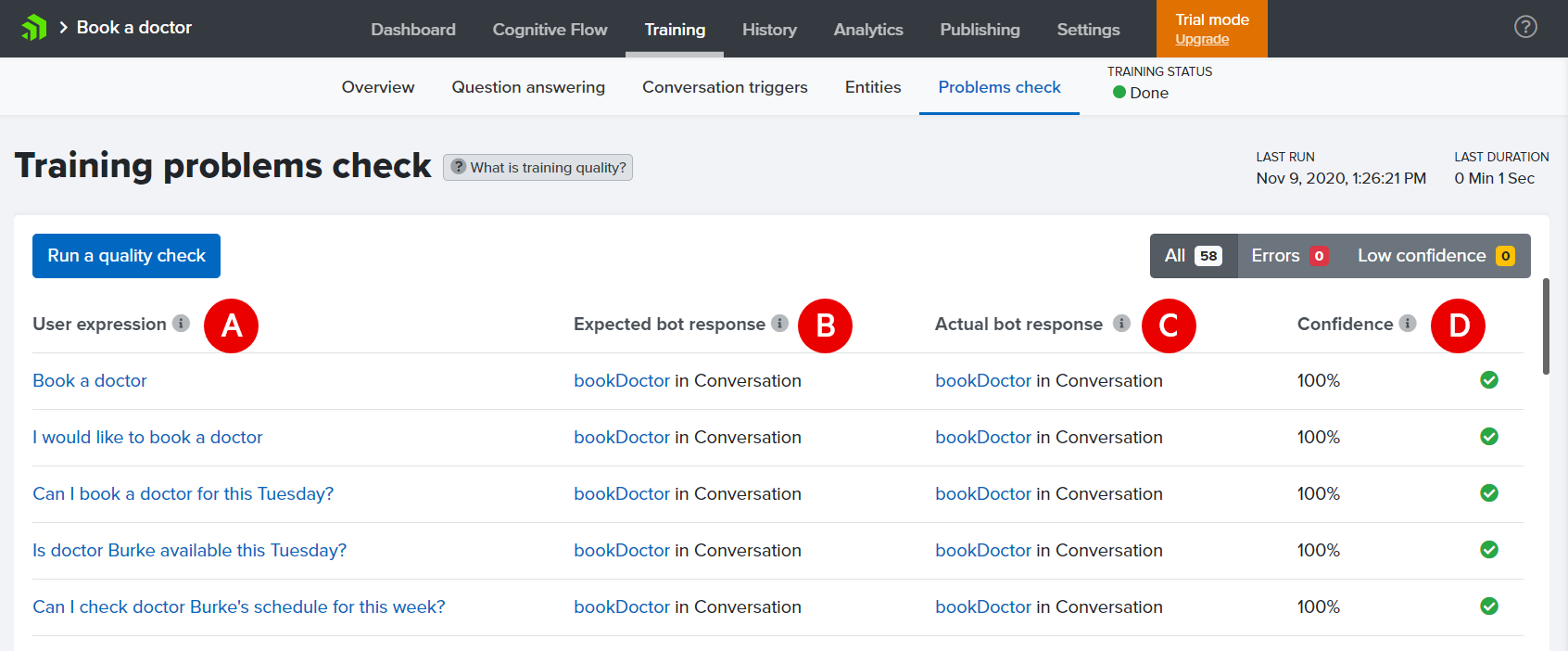

The training problems check is available in the Training section of the NativeChat portal under the Problems Check menu.

Report contents

Training data set

Marker A in the figure above indicates the user expressions. These are all of the following that you provided when training your bot:

- conversation trigger expressions

- expressions for entities of type Trait

- questions in Question answering

The check goes through all of these and records whether the bot responds with the correct conversation, entity, or answer.

Expected vs. actual response

A mismatch between the expected (marker B) and actual (marker C) outcome can occur when very similar expressions have been added as training data to two or more entities.

Confidence

The report also shows the confidence (marker D) for every test utterance. Confidence is the certainty with which the bot has matched the user expression against its training data set. The maximum is 100%. Matches with confidence below 65% are discarded, and the bot responds to the user with the default invalid-replies message.

Detected Problems

Problematic test utterances are marked with a red cross when:

- there is a mismatch between the expected and actual response,

- the confidence is too low, or

- no match is found
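The three conditions above can be sketched in a few lines of Python. This is only an illustration of the logic, not NativeChat's implementation: the `CheckResult` type, the `detect_problem` function, and the order in which the conditions are tested are all assumptions made for this example. The 65% threshold is the one stated in the Confidence section.

```python
from dataclasses import dataclass
from typing import Optional

# Matches below 65% confidence are discarded (see the Confidence section).
CONFIDENCE_THRESHOLD = 0.65


@dataclass
class CheckResult:
    """One row of the report. Hypothetical structure, for illustration only."""
    expression: str         # marker A: the user expression
    expected: str           # marker B: the expected conversation, entity, or answer
    actual: Optional[str]   # marker C: what the bot matched, or None if nothing
    confidence: float       # marker D: 0.0 to 1.0


def detect_problem(result: CheckResult) -> Optional[str]:
    """Return a short problem label, or None when the row is fine."""
    if result.actual is None:
        return "no match"
    if result.confidence < CONFIDENCE_THRESHOLD:
        return "low confidence"
    if result.actual != result.expected:
        return "mismatch"
    return None


if __name__ == "__main__":
    rows = [
        CheckResult("book a room", "booking", "booking", 0.92),
        CheckResult("reserve a room", "booking", "cancel-booking", 0.81),
        CheckResult("room please", "booking", "booking", 0.40),
        CheckResult("asdf", "booking", None, 0.0),
    ]
    for row in rows:
        print(row.expression, "->", detect_problem(row) or "ok")
```

A row that returns a label here corresponds to a row that the report would mark with a red cross.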

To troubleshoot these issues you can do a few things:

- if possible, remove the duplicate or very similar expressions from the different entities they are found in

- highlight important words: provide more expressions for the affected entity value

- remove stop words: words like is, are, my, to, the, a, an, of usually don’t bring much value

- convert similar Q&As to conversations.

These troubleshooting tactics are described in more detail in our blog post 5 Handy Tricks for Improving Your Chatbot Understanding.
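If you keep a copy of your training expressions outside the portal, a small script can help you spot near-duplicates before you run the check. The sketch below is a rough offline aid, not how NativeChat compares expressions: it uses Python's standard `difflib.SequenceMatcher` for character-level similarity, and the function names, the `training` dictionary layout, and the 0.8 similarity cut-off are all assumptions chosen for this example. The stop-word list is the one given above.

```python
from difflib import SequenceMatcher
from itertools import combinations

# Stop words listed in the troubleshooting tips above.
STOP_WORDS = {"is", "are", "my", "to", "the", "a", "an", "of"}


def strip_stop_words(expression: str) -> str:
    """Lowercase the expression and drop stop words."""
    words = expression.lower().split()
    return " ".join(w for w in words if w not in STOP_WORDS)


def find_similar_expressions(training, min_ratio=0.8):
    """Return pairs of similar expressions that belong to different targets.

    `training` maps a target name (conversation, entity value, or answer)
    to its list of expressions. The layout is hypothetical.
    """
    flat = [(target, expr) for target, exprs in training.items() for expr in exprs]
    pairs = []
    for (t1, e1), (t2, e2) in combinations(flat, 2):
        if t1 == t2:
            continue  # similar expressions on the same target are not a conflict
        ratio = SequenceMatcher(
            None, strip_stop_words(e1), strip_stop_words(e2)
        ).ratio()
        if ratio >= min_ratio:
            pairs.append((t1, e1, t2, e2, round(ratio, 2)))
    return pairs


if __name__ == "__main__":
    training = {
        "check-balance": ["what is my balance"],
        "check-limit": ["what is the balance"],
        "greet": ["hello there"],
    }
    for pair in find_similar_expressions(training):
        print(pair)
```

Pairs reported by such a script are candidates for removal or rewording. Whether they actually cause a mismatch still has to be confirmed with the problems check itself.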

To better train and fine-tune your bot, we recommend reading the blog post What is Natural Language Processing (NLP). It is a great (and quick) introduction to how NativeChat NLP works.

Quick Understanding Testing

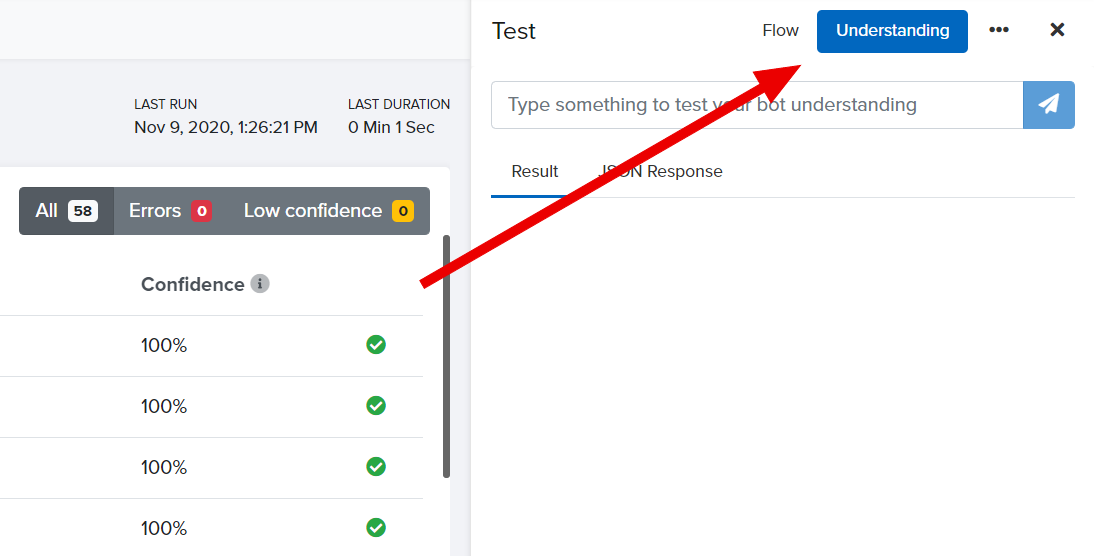

You can quickly check for problems with training by using the Understanding tab in the Test console.

You can use it to check how the bot interprets a single user expression directly from any of the Training section screens.

The test console will show the confidence for each of the detected entities and values.

Something missing or not clear?

Ask a question in our community forums or submit a support ticket.